Best Practices for Radiation Safety Officer Turnover

By Dr. Zoomie

How to Transition Radiation Safety Officer Responsibilities

So Doc, it’s like this – I’m RSO ...

Read more

By Dr. Zoomie

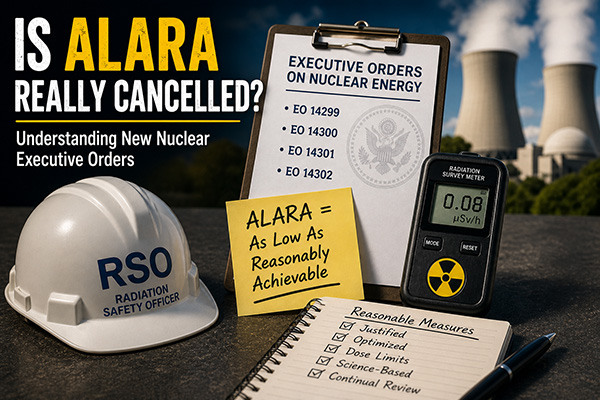

Hey Doc – what’s this I hear about ALARA being cancelled? Is that for real? ...

Read more

By Dr. Zoomie

Hi, Dr. Zoomie – how’re you doing? So here’s my question – I’m always hearing ...

Read more

By Dr. Zoomie

So, Dr. Zoomie – I hear about uranium mining but I don’t really know what ...

Read more

By Dr. Zoomie

Doc! I’m watching Monarch: Legacy of Monsters and in the second episode one of the ...

Read more

By Dr. Zoomie

Dr. Zoomie – so I know that we can make uranium fission and I know ...

Read more

By Dr. Zoomie

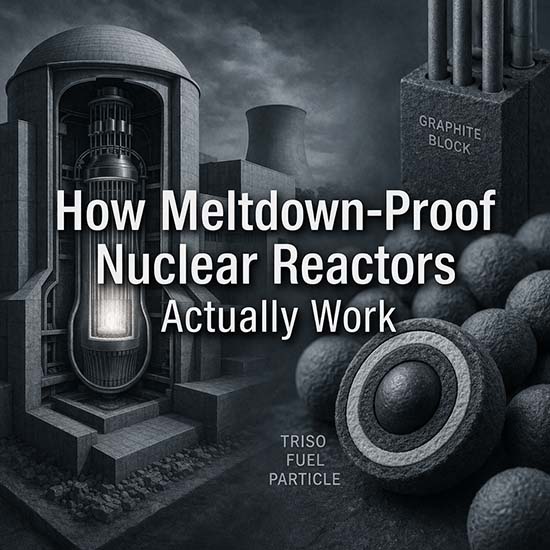

Yeah – with all we’ve heard about reactor melt-downs and what happened at Three Mile ...

Read more

By Dr. Zoomie

Hi, Dr. Zoomie! I’m a part-time RSO and I’ve got to admit that the job ...

Read more

By Dr. Zoomie

Radiation-munching fungus!?! Hi, Dr. Zoomie – I read something about fungus that’s eating radiation at ...

Read more

By Dr. Zoomie

Doc! What’s this I hear that the CIA was setting up a nuclear battery (or ...

Read more